Research Methods I

The 10,000ft Picture

The Essential Components

Notes from class are organized around these questions as much as possible.

- Why study? — Motivation(s)

- What to study? — The Research Question(s)

- How to study? — The Research Design

- Whom to study? Where do you study them? — Subjects

- What can be captured? — Variables

- What did you discover? — Findings

- What do your findings mean/imply? How confident are you in your findings? — Analysis

1. What to Study? — The Research Question(s)

1.2 Identifying a Research Focus

- Defining a general area of interest

- Identifying specific research questions

- Operationalizing questions (connecting to methods)

- Exploratory vs. hypothesis-testing vs. theory-testing goals

- Informatics scope

- Clinician-focused: communication, information needs, errors, decision-making, adherence to guidelines

- Patient-focused: self-management, engagement, health communities

- Intervention types: decision support, communication tools, summarizers

1.4 Role of Theory in Shaping Questions

- Theory provides direction, concepts, constructs, and variables

- Choice of framework defines what you look for and how you interpret it

- Different theories yield different lenses on the same empirical findings

- Example: diabetes self-management viewed through decision-making vs. problem-solving vs. sensemaking

- Atheoretical research: description, classification, prediction, grounded theory (developing theory inductively)

1.5 Key Theories and Frameworks

Distributed Cognition (Hutchins)

- Unit of analysis: socio-technical system

- Major construct: propagation of representations through representational media

- Cognitive artifacts; transforming cognitive tasks into perceptual tasks

- Application to clinical settings: data flow from monitoring devices → EHR → summaries → verbal presentation → notes → orders

Activity Theory

- Unit of analysis: a meaningful activity

- Components: subjects (actors), objects (objectives), community (social context)

- Relationships: rules, division of labor, mediating artifacts

Situation Awareness (Endsley)

- Perception → comprehension → projection

- Applied in dynamic systems (aviation, clinical care)

Donabedian’s Quality of Care

- Structure → process → outcome

Technology Acceptance Model (Davis)

- Perceived usefulness, perceived ease of use → behavioral intention → actual use

Theory of Reasoned Action / Planned Behavior (Ajzen & Fishbein)

- Attitudes, subjective norms, perceived behavioral control → intention → behavior

DeLone & McLean IS Success Model

- System quality, information quality, service quality → intention to use / use → user satisfaction → net benefits

CSCW Framework (Pratt et al.)

- Collaborative use of IT systems or collaboration through IT

- Levels of analysis: political, institutional, large group, small group

- Key concepts: incentive structures, workflow (routine vs. exception), awareness (focus, nimbus)

Coiera’s Communication-Conversation Model

- Communication–information task continuum

- Common ground, grounding (solid ground vs. shifting ground)

RE-AIM (Bakken & Ruland)

- Reach — proportion and representativeness of participants

- Effectiveness — impact on outcomes, including unintended effects and cost

- Adoption — proportion and representativeness of settings/agents willing to initiate

- Implementation — fidelity to protocol, consistency of delivery, cost

- Maintenance — long-term effects (individual) and institutionalization (setting)

1.6 Framework Building Blocks

- Concepts: terms that abstractly define objects, phenomena, or ideas (directly observable or agreed-upon)

- Constructs: concepts that cannot be directly observed (e.g., emotional response, satisfaction)

- Variables: operationalized constructs — measurable

- Relational statements: direction, shape, strength, symmetry, sequencing, probability, necessity, sufficiency

- Conceptual models: sets of highly abstract, related constructs; express assumptions and philosophical stance

- Theories: narrow, testable conceptual models — describe, explain, predict, or control phenomena

- Conceptual maps: diagrams of interrelationships among concepts and statements

- Concept synthesis, concept derivation, concept analysis

1.7 Literature Review as Question Refinement

Purpose

- Situating research in existing knowledge

- Identifying gaps and opportunities

- Clarifying contributions to knowledge

- Identifying relevant frameworks and methods

Searching the Literature

- Identify main concepts/keywords (research topics and methods)

- Develop a search strategy

- Select databases (cast a wide net — not just PubMed)

- Systematically record references (tools: Zotero)

Levels of Reading

- Skimming (titles, refine search, identify clusters)

- Comprehending (reading individual papers)

- Analyzing (writing summaries)

- Synthesizing (summarizing body of work, identifying gaps)

Reviewing Individual Papers

- Comprehend: identify problem, rationale, objectives, variables, design, sample, measurement, analysis, interpretation

- Assess: compare to ideal research process; identify strengths and weaknesses

- Analyze: examine logical links, consistency of implementation with goals, inferences

- Evaluate: determine meaning, significance, and validity

- Cluster: synthesize findings across papers; relate to body of knowledge

Synthesizing Research Evidence

- Systematic review — comprehensive search, explicit selection criteria, data synthesis

- Meta-analysis — pooling results from multiple studies, computing effect size

- Integrative review — synthesis across studies including qualitative; result is narrative

- Metasummary — qualitative; summing findings across reports

- Metasynthesis — qualitative; using original studies and metasummaries to produce synthesis

- PRISMA framework: planning → conducting → reporting
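
The pooling step of a meta-analysis can be sketched as an inverse-variance weighted average. This is a minimal fixed-effect sketch, and it assumes each study reports an effect estimate with its standard error. The function name and the numbers are hypothetical; the notes do not say which pooling model was covered in class.

```python
import math

def pool_fixed_effect(effects, standard_errors):
    """Inverse-variance weighted average of study effects (fixed-effect model).

    Assumes all studies estimate the same underlying effect; a random-effects
    model would be needed if the studies are heterogeneous.
    """
    weights = [1.0 / se ** 2 for se in standard_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se

# Hypothetical effect estimates from three studies (illustrative numbers only).
pooled, pooled_se = pool_fixed_effect([0.20, 0.35, 0.50], [0.10, 0.15, 0.25])
print(round(pooled, 3), round(pooled_se, 3))
```

More precise studies (smaller standard errors) receive more weight, so the pooled estimate sits closest to the most precise study.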

1.8 From Questions to Hypotheses

- Theoretical hypothesis → testable/statistical hypothesis

- Properties of a good hypothesis

- Grounded in existing theory/knowledge

- Testable

- Simple and specific (one predictor, one outcome)

- Stated in advance

- Falsifiable

- Hypothesis-generating studies: when relationships are unclear and you need data to form a hypothesis

1.9 Philosophy of Science

Historical Development

- Antiquity: Plato (idealism, reasoning from ideas, universal forms) vs. Aristotle (realism, observation, classification, syllogism)

- Middle Ages: dominance of theology; rediscovery of Aristotle; attempts at reconciliation (Aquinas, Roger Bacon)

- Renaissance: Copernicus and the heliocentric model; observation-based theory; printing press and dissemination; scientific method

- Enlightenment

- Descartes: rationalism, skepticism, “I think therefore I am”

- Bacon: empiricism, inductive reasoning from fact → axiom → law

- Newton: mathematical description, combining deduction and induction, hypothesis testing

- Hume: problem of induction, Hume’s fork (knowledge of ideas vs. knowledge of facts)

- Kant: a priori knowledge, ontology vs. epistemology, mental representations of reality

- Modern Period

- Hegel: idealism, dialectical method, role of history in shaping knowledge

- Comte: positivism — knowledge based purely on facts and logic; mathematics as superior science

- Dilthey: split between natural and social science; interpretivist position

- Darwin: theory of evolution, parsimony (simplest explanation)

- Early 20th Century

- Revolution in physics (relativity, quantum mechanics)

- Vienna Circle: logical positivism, verifiability criterion, reductionism

- Phenomenology: Husserl (experiential point of view), Heidegger (existential phenomenology, ready-to-hand / present-at-hand)

- Ethics in science: Manhattan Project → Nuremberg trials → Nuremberg Code

- Late Modern Period

- Postmodernism: science as social construction, facts as social constructs, science as discourse

- Existentialism (Sartre): consciousness, freedom of choice

- Postmodern critique: Latour (laboratory life), Kuntz (assault on objective knowledge erodes trust)

Key Philosophical Concepts

- Idealism vs. realism

- Inductive vs. deductive reasoning

- Falsifiability and demarcation (Popper) — black swan example

- Paradigm shifts (Kuhn) — normal science vs. scientific revolutions

- Epistemological anarchy (Feyerabend) — “anything goes”

- Communicative rationality (Habermas) — rationality situated in communication

- Scientific realism (van Fraassen, McMullin, Boyd) — theories as historical process toward truth

Computational Philosophy of Science

- Herbert Simon: bounded rationality, satisficing, cognition as computation, scientific discovery as problem-solving

- Big data challenges: samples → populations, experiment vs. observation, statistical crisis (“when everything is significant”), role of theory, ethics of data (boyd & Crawford — inequality, power, class)

- AI: intelligence, utility vs. general intelligence, role of humans and informaticians

1.10 Writing the Proposal (Question-Facing Sections)

Specific Aims (1 page)

- Opening paragraph: opportunity, challenge, status, environmental change

- What you are going to do (based on research question)

- Numbered aims with accompanying hypotheses

Significance

- Why is the question important? Who benefits? What answers will the study provide?

- Organize: why is the problem a problem → what has been done → challenges → what else is warranted → what needs to be known now

Innovation

- New ideas, new models, new applications

- How the proposal challenges or shifts current paradigms

Funding Landscape

- Government: NIH (NLM), NSF, AHRQ, PCORI

- Foundations: RFAs, general or by invitation

- Corporate support

- Intramural support (pilot studies, $20–40K)

- NIH proposal types: R21 (exploratory), R01 (main grant), R18 (translational), K awards (career development)

- RFA vs. unsolicited

Review Criteria

- Significance, innovation, approach, investigator, environment

- Common reasons for rejection: ill-defined objectives, wrong scope, lack of integration, idea already tried, poor approach

2. How to Study? — The Research Design

2.1 Major Design Distinctions

- Qualitative vs. quantitative data

- Observational vs. experimental

- Prospective vs. retrospective

- Measurement study vs. demonstration study

- Levels of authority: expert opinion → observation → experiment

2.2 Qualitative Research Designs

When to Use

- Exploratory research: identify and refine questions, understand opportunities for innovation

- Understanding complex work practices in complex contexts (organization, culture, personal motivations)

- Evaluating why informatics innovations are used or not used

Historical Roots

- Anthropology: armchair anthropology → fieldwork (Malinowski, Boas, Mead)

- Sociology: Chicago school (Park — urban poor, reform; Hughes — non-dispossessed, medical/police)

Data Collection Methods

Observations

- Participant vs. non-participant observation

- What to record: jotting notes during observations, expanding within 2 hours

- Start broad, gradually focus, periodically re-examine

- Challenges: missing critical stakeholders, keeping observations too broad or narrowing too quickly, sparse notes, going native, Hawthorne effect

Interviews

- Unstructured/ethnographic: no guide, conversation form, chain of associations; used very early in research

- Semi-structured: interview guide with broad areas and probing questions; guide is flexible, not prescriptive

- The grand tour question: sets tone, easy to answer, not yes/no

- Master-apprentice model vs. interviewer-interviewee model

- Probing: “tell me more,” “why do you say that,” echo technique, silence

- Avoid: leading questions, abstract questions, summarizing (that’s the researcher’s job)

- Challenges: quiet interviewees, politically charged topics, emotional subjects

Surveys

- Reaching wide audiences, quantifying and extending qualitative findings

- Not good for discovery — better after initial qualitative work

- Question design: avoid ambiguity, avoid leading questions

- Always pilot

Artifacts

- Hand-written notes, forms, guidelines, reference materials, pictures

- Often discarded at end of shift — ask to collect them

Qualitative Data Analysis

General Process

- Convert all data to text → identify major themes → provide illustrative case studies

- Analysis begins before data collection and continues through writing

- Three common elements: data reduction, data organization, data explanation/verification

Grounded Theory (Glaser & Strauss)

- Goal: develop a theory from qualitative data

- Theoretical sensitivity: reviewing existing theories to focus investigation

- Open coding: labeling phenomena, discovering categories, developing properties and dimensions

- Axial coding: identifying causal conditions, intervening conditions, action/interaction strategies, consequences for each category

- Selective coding: selecting core category, explicating story line, relating other categories to core, validating

Thematic Analysis (Braun & Clarke)

- Similar to grounded theory but without theoretical commitment

- Focus on identifying recurrent themes — patterns of meaning

- Themes are synthesized by researchers (they do not “emerge”)

- Six steps: familiarizing → generating initial codes → searching for themes → reviewing themes → defining/naming themes → producing report

- Focus: broad overview vs. focused examination

- Approach: inductive (data → themes) vs. deductive (theory → data categories)

- Level: semantic (descriptive) vs. latent (underlying ideas, interpretive)

- Prevalence: not about frequency; about significance in relation to research question

Writing Qualitative Results

- Present main findings (themes or overarching theory)

- Illustrate with quotes — balance quotes and interpretations

- Quotes are for illustration, not replacement of analytic narrative

- Forms of presentation: narrative/thick description, conceptual framework

Tools

- Low-tech: hand-written comments, printed transcripts, affinity diagrams with Post-it notes

- High-tech: Excel, NVivo

2.3 Quantitative Observational Designs

Cross-Sectional Studies

- Single point in time; all measurements within a short period

- Prevalence, not incidence

- Best for measuring associations; cannot establish causation

- Strengths: fast, inexpensive, no loss to follow-up

- Weaknesses: cannot establish causal relationships, limited for rare diseases

Cohort Studies

Prospective Cohort

- Subjects selected based on exposure; followed over time

- Assesses incidence; investigates potential causes

- Measures variables more completely than retrospective

- Weaknesses: expensive, inefficient for rare outcomes, cannot assume causality

Retrospective Cohort

- Assembly, baseline measurements, and follow-up already happened

- Uses existing data; inexpensive

- Weaknesses: limited control over sampling and data quality

Multiple/Double Cohort

- Separate cohorts with different exposure levels

- May be only feasible approach for rare exposures (occupational/environmental hazards)

- Weakness: cohorts from different populations — increased confounding

Case-Control Studies

- Subjects recruited based on outcome (dependent variable)

- Retrospective; predictor variables measured among groups

- Very efficient for rare outcomes; short duration, small sample

- Weaknesses: sampling bias, retrospective measurement, limited to one outcome

- Control selection strategies: hospital/clinic-based, population-based, matching, multiple control groups

Nested Designs

Nested Case-Control

- Cases drawn from a predefined cohort

- Avoids biases of drawing cases and controls from different populations

- Useful for expensive predictor measurements on archived specimens/records

Nested Case-Cohort

- Controls are random sample of entire cohort regardless of outcome

- Can estimate incidence and prevalence

- Reusable comparison group for multiple outcomes

Case-Crossover

- Each case serves as its own control

- Compares exposures at time of outcome vs. other time periods

- Useful for short-term effects of intermittent exposures

2.4 Types of Demonstration Studies

- Descriptive — estimate dependent variables

- Comparative — compare performance

- Correlational — effect of independent on dependent variable without manipulation

2.5 Design of Informatics Interventions

What Is Design

- “The ability to imagine that-which-does-not-yet-exist, and to make it appear in concrete form” (Nelson & Stolterman)

- “Making decisions, often in the face of uncertainty” (Zinter)

- Initial state → desired state transformation (Doblin’s basal model)

- Challenge: predicting future states; unintended consequences

The Design Process

- Discover (what is?) → Ideate (what if?) → Embodiment (what wows?) → Development (what works?) → Evaluation

- Also described as: analysis → synthesis → evaluation (Jones)

Contextual Design (Discover Phase)

- Using collected data to develop conceptual account of work

- Synergy between problems and solutions

Work Models

- Flow model: communication, coordination, roles, groups, information flow, artifacts, breakdowns

- Sequence model: intent, trigger, steps, orders/loops/branches, breakdowns

- Artifact model: information, parts, structure, annotations, presentation, usage, breakdowns

- Cultural model: influencers, extent of effect, direction of influence, breakdowns

- Physical model: places, structures, tools, artifacts, layout, breakdowns

Process

- Interpretation sessions (interviewer, work modelers, recorder)

- Consolidation: comparing individual models, identifying patterns

- Affinity diagrams: organizing individual notes into hierarchies of common issues

- Work redesign: identify good practices, inefficiencies, constraints; redesign roles, sequences, automation

- Sharing with stakeholders (member checks)

Ideation Techniques

- Analogical thinking, brainstorming, attribute listing, case-based reasoning, forced connections

- IDEO cards, lateral thinking, morphological analysis

- SCAMPER (substitute, combine, adapt, modify, put to other purposes, eliminate, rearrange)

- SIT (unification, multiplication, division, breaking symmetry, object removal)

- Synectics, TRIZ, Whack Pack

Design Embodiments

- User stories/scenarios: plain language descriptions of interaction; goals, expectations, actions, reactions

- Storyboards: comic-strip narratives of key interaction moments

- Prototypes/mockups: low fidelity (paper, simple interactive) → medium/high fidelity; low cost = low barrier to change

- Wireframes: structural layout without visual design

- Wizard of Oz: human operator behind the curtain simulates complex functionality

Human vs. Computer Capabilities

- People: creative tasks, open-ended tasks, pattern recognition, ambiguity, context, emotion, physical senses

- Computers: perfect memory, fast calculations, large-scale data processing, consistency, non-destructive editing

2.6 Evaluation Methods

Design Critiques

- Informal meeting to critique current designs (3–7 people)

- Goals: compare approaches, discuss user flow, explore alternatives, get cross-functional feedback

- Rules: clarifying questions first, listen before speaking, explore alternatives, be gentle, avoid absolutes, speak from your point of view

Heuristic Evaluation (Nielsen & Molich)

- Trained analysts critique system against recognized usability heuristics

- Two passes: first to familiarize, second to examine elements

- 10 Heuristics: visibility of system status, match between system and real world, user control and freedom, consistency and standards, error prevention, recognition rather than recall, flexibility and efficiency, aesthetic and minimalist design, help users recover from errors, help and documentation

- Severity ratings: 0 (not a problem) → 4 (usability catastrophe)

- Multiple evaluators find more problems (convergence curve)
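
One way to picture the convergence curve is a simple model in which each evaluator independently finds any given problem with the same probability. Both the independence assumption and the detection rate of 0.3 are illustrative assumptions, not figures from the notes.

```python
def proportion_found(evaluators, detection_rate=0.3):
    """Expected share of usability problems found by a group of evaluators.

    Assumes each evaluator finds each problem independently with probability
    `detection_rate` (a hypothetical value chosen for illustration).
    """
    return 1 - (1 - detection_rate) ** evaluators

for k in (1, 3, 5, 10):
    print(k, round(proportion_found(k), 2))
```

Under this model each additional evaluator adds less than the previous one, which is why the curve flattens.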

Cognitive Walkthrough

- Goal/task-specific evaluation of cognitive processes needed to complete tasks

- Preparation: representative tasks, user population, context, action sequences, initial goals

- For each step: will user try to achieve this effect? Will user notice correct action? Will user understand how to achieve subtask? Does user get feedback?

- More preparation than heuristic evaluation; more explicit goal/task structure

Usability Testing

- Task-based evaluation with potential users in semi-controlled settings

- Think-aloud technique: users verbalize thought process

- Observer role: neutral (probing questions, no assistance) or active participant

- Instructions: stress that it tests the system, not the user; no wrong answers

- Scorecards: task, problems, severity

- Software tools: Morae (screen capture, webcam, event logging)

Field/Feasibility Evaluation

- During pilot deployment in limited settings

- Researcher-driven (analyst present, can observe and ask questions) vs. remote (built-in probes)

- Hybrid of lab and ethnographic methods

2.7 Writing the Proposal (Approach Section)

- Preliminary studies: current setting, investigator qualifications, relevant prior work

- Framework definition

- Detailed plan per aim: development, implementation, evaluation

- Evaluation: research questions, research design, measurements, statistical tests, confirmatory vs. exploratory hypotheses, power calculations

- Privacy and security: data sources, recruitment, IRB, HIPAA, data storage/transfer

- Risk mitigation strategies and limitations

- Dissemination plan (papers, other methods, future proposals)

- Timeline

3. Whom to Study? — Subjects

3.1 From Population to Sample

- Population → target population → accessible population → sample → subject

- Defining eligibility criteria (inclusion/exclusion)

- Broad criteria → heterogeneous sample; narrow criteria → homogeneous sample

- Representativeness: demonstrated with comparisons to population parameters

3.2 Random Sampling Methods

- Simple random sampling: random subset of a population

- Stratified random sampling: sampling within sub-populations (strata) based on characteristic of interest

- Cluster sampling: sampling naturally occurring clusters (e.g., hospital wards, clinics)

- Systematic sampling: used when ordered list of population is available
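
The four random sampling methods above can be sketched with Python's standard library. This is a minimal sketch; the sampling frame, field names, and function names are all hypothetical.

```python
import random

def simple_random_sample(population, n, seed=0):
    """Draw n units at random, without replacement."""
    rng = random.Random(seed)
    return rng.sample(population, n)

def stratified_sample(population, stratum_of, n_per_stratum, seed=0):
    """Draw n_per_stratum units at random within each stratum."""
    rng = random.Random(seed)
    strata = {}
    for unit in population:
        strata.setdefault(stratum_of(unit), []).append(unit)
    sample = []
    for label in sorted(strata):
        sample.extend(rng.sample(strata[label], n_per_stratum))
    return sample

def cluster_sample(population, cluster_of, n_clusters, seed=0):
    """Pick whole clusters at random and keep every unit inside them."""
    rng = random.Random(seed)
    clusters = sorted({cluster_of(unit) for unit in population})
    chosen = set(rng.sample(clusters, n_clusters))
    return [unit for unit in population if cluster_of(unit) in chosen]

def systematic_sample(ordered_population, n, seed=0):
    """Take every k-th unit from an ordered list, starting at a random offset."""
    rng = random.Random(seed)
    k = len(ordered_population) // n
    start = rng.randrange(k)
    return ordered_population[start::k][:n]

# Hypothetical sampling frame: 120 clinicians across 4 wards and 2 roles.
frame = [
    {"id": i, "ward": f"ward-{i % 4}", "role": "nurse" if i % 3 else "physician"}
    for i in range(120)
]

print(len(simple_random_sample(frame, 10)))
print(len(stratified_sample(frame, lambda u: u["role"], 5)))
print(len(cluster_sample(frame, lambda u: u["ward"], 2)))
print(len(systematic_sample(frame, 12)))
```

Stratified sampling guarantees representation of each role, while cluster sampling trades that guarantee for the convenience of recruiting whole wards.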

3.3 Nonrandom Sampling Methods

- Convenience sampling: meet eligibility criteria and easily accessed

- Consecutive sampling: subjects recruited one after another

- Purposive sampling: selected for specific qualities (qualitative)

- Network/snowball sampling: participants refer others (qualitative)

- Theoretical sampling: used in grounded theory; sampling driven by emerging theory

3.4 Qualitative Study Participants

- Identifying stakeholders and gatekeepers

- Securing cooperation and building rapport

- Users vs. stakeholders distinction

- How to introduce the study (framing matters)

- Consenting all participants in advance

3.5 Sampling Error

- Random variation: impacts precision; solution is increasing sample size

- Systematic variation/selection bias: impacts accuracy; related to non-random sampling, refusal rates, exclusion criteria

3.6 Central Limit Theorem

- Sampling distribution of means approaches a normal distribution as N increases (regardless of the underlying distribution, provided its variance is finite)

- Mean of the sampling distribution equals the population mean; its variance is the population variance divided by N

- Implication: can infer from a single sample to the population given sufficient N
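
A small simulation can make the theorem concrete. This sketch draws repeated samples from a skewed (exponential) population and looks at the mean and variance of the resulting sample means. The choice of distribution, the sample sizes, and the function names are assumptions made for illustration.

```python
import random
import statistics

def draw_exponential(rng):
    """One draw from a skewed population with mean 1 and variance 1."""
    return rng.expovariate(1.0)

def sampling_distribution_of_means(draw, n, replicates=1000, seed=1):
    """Means of `replicates` independent samples, each of size n."""
    rng = random.Random(seed)
    return [
        statistics.fmean([draw(rng) for _ in range(n)])
        for _ in range(replicates)
    ]

for n in (5, 50, 200):
    means = sampling_distribution_of_means(draw_exponential, n)
    # The mean of the sample means stays near 1; their variance shrinks roughly as 1/n.
    print(n, round(statistics.fmean(means), 3), round(statistics.pvariance(means), 4))
```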

3.7 What Can Go Wrong

- Accidental over-recruiting within a particular characteristic

- Non-response bias

- Insufficient number of participants

3.8 Ethics of Human Subjects Research

- Subjects: clinicians, patients, non-clinical caregivers, communities

- Inclusion/exclusion criteria

- Privacy, informed consent, IRB approval

- Nuremberg Code (first formulation of ethical conduct principles)

- HIPAA

- Do no harm

4. What Can Be Captured? — Variables

4.1 From Questions to Variables

- Conceptualization: defining main concepts — clear, precise definitions of what is included and excluded

- Operationalization: identifying indicators of selected concepts; how they will be observed and measured

4.2 Types of Observability

- Direct observables: can be captured by direct observation (e.g., number of information exchanges during rounds)

- Indirect observables: can be captured through indirect means (e.g., number of informal exchanges outside rounds)

- Constructs: cannot be captured directly or indirectly; require proxy measures or scales (e.g., satisfaction, quality of teamwork)

4.3 Variable Roles

- Independent/predictor variables: what you are manipulating or classifying by in the study

- Dependent/outcome variables: what you are measuring as the result

- Confounding variables: extraneous variables not manipulated but potentially impacting the outcome

4.4 Data Formats

- Nominal, categorical, ordinal, interval, continuous

- Qualitative data: observations, interviews, artifacts, photographs

- Quantitative data: measurable, statistics-based

4.5 Measurement

- Measurement studies: assess how accurately an attribute can be measured (error estimation)

- Measurement accuracy determines how accurate the demonstration study will be

- Use of standardized or well-accepted measures/scales increases validity

- Choosing the right measure as a predictor of the phenomenon of interest

4.6 Qualitative Data Forms

- Field notes from observations (jotted notes expanded into narratives)

- Interview transcripts or notes

- Artifacts collected from the field (hand-written notes, forms, guidelines)

- Photographs and sketches of physical spaces

- Audio/video recordings

5. What Did You Discover? — Findings

5.1 Descriptive Statistics

- Measures of central tendency: mean, median, mode

- Point estimates and interval estimates (confidence intervals)

- Frequency of occurrence

- Prevalence (cross-sectional) vs. incidence (cohort)
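
A point estimate and an interval estimate can be computed together. This sketch assumes a normal approximation for the 95% confidence interval (z = 1.96), which is reasonable only for moderately large samples. The data and the function name are hypothetical.

```python
import math
import statistics

def describe(values, z=1.96):
    """Mean, median, and an approximate 95% confidence interval for the mean."""
    n = len(values)
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)          # sample standard deviation
    half_width = z * sd / math.sqrt(n)     # normal approximation (assumption)
    return {
        "n": n,
        "mean": mean,
        "median": statistics.median(values),
        "ci95": (mean - half_width, mean + half_width),
    }

# Hypothetical task-completion times in minutes.
times = [2, 4, 4, 4, 5, 5, 7, 9]
print(describe(times))
```

For small samples a t-based interval would be wider than the one produced here.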

5.2 Quantitative Outcome Measures

- Risk: probability of experiencing an outcome given exposure (N with outcome / N exposed)

- Odds: likelihood of outcome compared to no outcome (N with outcome / N without outcome)

- Rates: events accumulated over time (N with outcome / person-time exposed)

- Classic epidemiology table (2×2: exposed/not exposed × positive/negative outcome)
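
The three measures above can be computed directly from the cells of the 2×2 table. In this sketch the counts are hypothetical, and the risk ratio and odds ratio are standard comparisons added here for illustration; the notes themselves define only risk, odds, and rates.

```python
from dataclasses import dataclass

@dataclass
class TwoByTwo:
    """Counts from a 2x2 table: exposure (rows) by outcome (columns)."""
    exposed_pos: int
    exposed_neg: int
    unexposed_pos: int
    unexposed_neg: int

    def _row(self, exposed):
        if exposed:
            return self.exposed_pos, self.exposed_neg
        return self.unexposed_pos, self.unexposed_neg

    def risk(self, exposed=True):
        """N with outcome / N in the group."""
        pos, neg = self._row(exposed)
        return pos / (pos + neg)

    def odds(self, exposed=True):
        """N with outcome / N without outcome."""
        pos, neg = self._row(exposed)
        return pos / neg

    def risk_ratio(self):
        return self.risk(True) / self.risk(False)

    def odds_ratio(self):
        return self.odds(True) / self.odds(False)

def rate(events, person_time):
    """Events per unit of person-time at risk."""
    return events / person_time

# Hypothetical counts: 20 of 100 exposed and 10 of 100 unexposed have the outcome.
table = TwoByTwo(exposed_pos=20, exposed_neg=80, unexposed_pos=10, unexposed_neg=90)
print(round(table.risk(), 3), round(table.odds(), 3))
print(round(table.risk_ratio(), 3), round(table.odds_ratio(), 3))
print(rate(events=12, person_time=400.0))
```

Risk and odds diverge as the outcome becomes more common, which is why the odds ratio exceeds the risk ratio in this example.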

5.3 Qualitative Findings

- Themes from thematic analysis (prevalence, significance relative to research question)

- Core category and theory from grounded theory analysis

- Illustrative quotes balanced with analytic narrative

- Work models from contextual design (flow, sequence, artifact, cultural, physical)

- Affinity diagrams synthesizing key observations into hierarchies

- Thick description and narrative accounts

5.4 Translational Research Stages

- T1: laboratory to clinical practice — bench to bedside (case studies, Phase 1–2 clinical trials)

- T2: clinical studies to populations — research to practice (observational studies, Phase 3–4 trials)

- T3: general populations to general practice — guidelines to practice (dissemination and implementation research)

- T4: application to real-world outcomes — practice to impact (policy research)

5.5 Clinical Trial Phases

- Phase 0: exploratory, first-in-human

- Phase 1: safety, tolerability, actual action

- Phase 2: efficacy

- Phase 3: multi-center, effectiveness

- Phase 4: post-marketing surveillance

6. What Does It All Mean? — Analysis (Confidence in Findings)

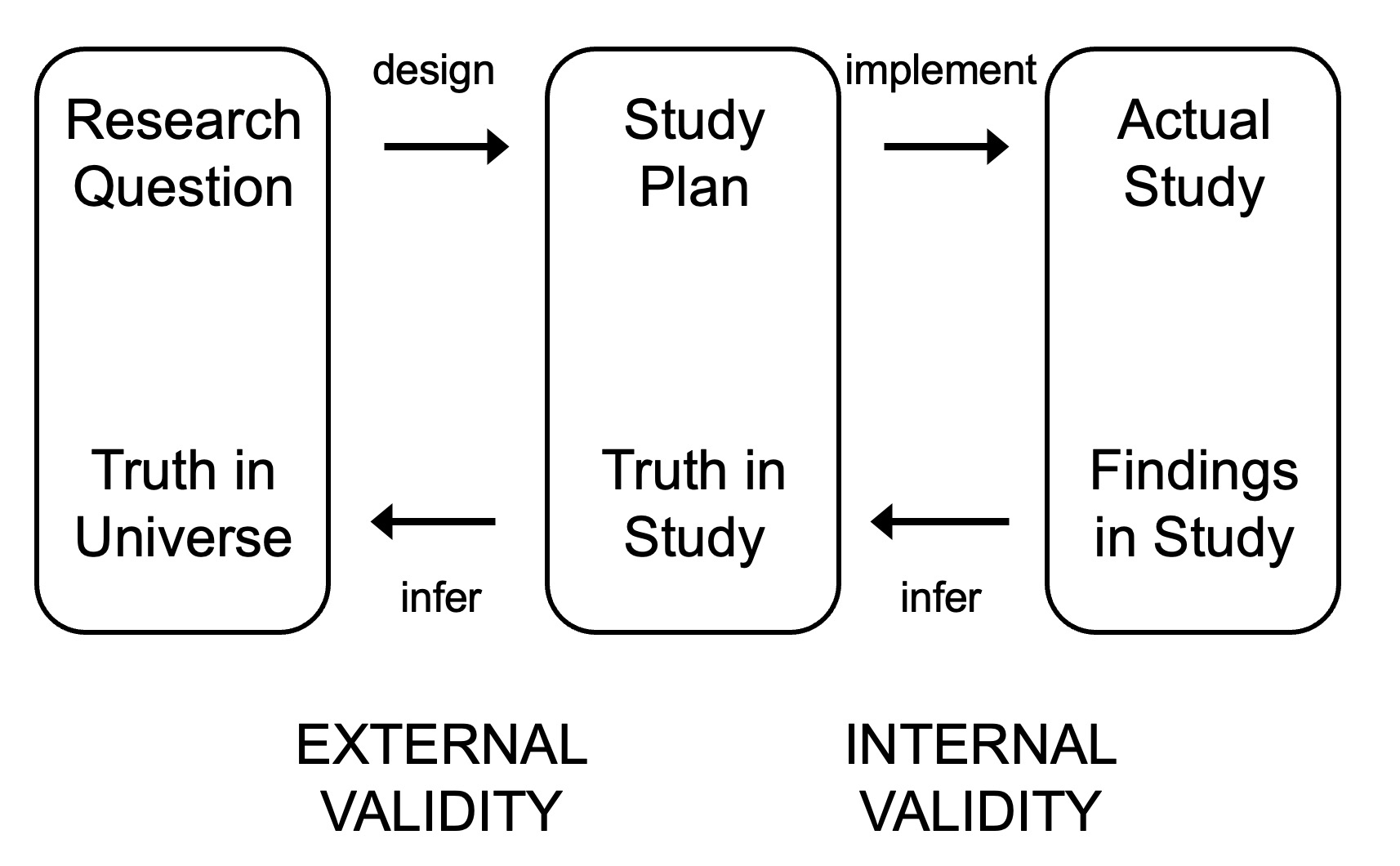

6.1 The Inference Model

- Research question → truth in universe

- Study plan → truth in study

- Actual study → findings in study

- Design and implementation connect questions to plans to findings

- Drawing conclusions: infer from findings back to truth

- Internal validity governs the inference from findings in the study to truth in the study

- External validity governs the inference from truth in the study to truth in the universe

6.2 Internal Validity

- Are conclusions valid within the setting of the study?

- Do measurements correspond to constructs of interest (are we studying what we intended)?

- Does the study design allow testing the hypothesis?

- Were the right statistical tests chosen given the questions and data?

6.3 External Validity

- Can conclusions be applied in other settings?

- Generalizability from sample to population

- Can others benefit from the results?

6.4 Bias and Error

Random Error

- Due to chance; measures equally likely to be distorted in either direction

- Impacts precision

- Solution: increase sample size

Systematic Error / Bias

- Distortion in a specific direction

- Impacts accuracy

- Easier to estimate direction than magnitude

- Sources: sampling bias, selection bias, recall bias, measurement bias, confounding
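
The difference between the two kinds of error can be illustrated with a simulation. A larger sample narrows the spread of estimates (precision) but leaves a constant bias untouched (accuracy). The true value, noise level, and bias below are hypothetical.

```python
import random
import statistics

def measure(true_value, n, noise_sd, bias=0.0, seed=0):
    """n measurements with random noise plus a constant systematic offset."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0, noise_sd) for _ in range(n)]

def study_means(true_value, n, noise_sd, bias, studies=300):
    """Mean estimate from each of many simulated studies of size n."""
    return [
        statistics.fmean(measure(true_value, n, noise_sd, bias, seed=s))
        for s in range(studies)
    ]

for n in (25, 400):
    estimates = study_means(true_value=100.0, n=n, noise_sd=10.0, bias=5.0)
    # Spread shrinks with n (random error); the center stays near 105 (bias).
    print(n, round(statistics.fmean(estimates), 2), round(statistics.stdev(estimates), 2))
```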

6.5 Hypothesis Testing

The Null and Alternative Hypotheses

- Null hypothesis: no relationship between variables or difference between populations (what we wish to disprove)

- Alternative hypothesis: the hypothesized association

- Two-sided: association in unspecified direction (more conservative, preferred)

- One-sided: association in specified direction

- Rejecting null does not guarantee alternative is true (unless only two possibilities exist)

The Testing Process

- State hypothesis

- State decision rule

- State assumptions

- Collect data

- Describe data (descriptive statistics)

- Review assumptions

- Select test statistic (depends on distribution)

- Calculate test statistic

- Make statistical decision (reject or fail to reject null)

- Draw conclusions

P-Value

- Probability of results at least as extreme as observed, assuming null hypothesis is true

- Lower p-value → observed data are less compatible with the null hypothesis (the p-value is not the probability that the results are due to chance)
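
The definition of the p-value can be made concrete with a permutation test, which builds the null distribution by shuffling group labels. This is a minimal sketch using a two-sided difference in means; the data and the function name are hypothetical, and the notes do not prescribe this particular test.

```python
import random
import statistics

def permutation_p_value(group_a, group_b, permutations=5000, seed=0):
    """Two-sided p-value for a difference in means, by label shuffling."""
    rng = random.Random(seed)
    observed = abs(statistics.fmean(group_a) - statistics.fmean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(permutations):
        rng.shuffle(pooled)  # under the null, group labels are exchangeable
        diff = abs(statistics.fmean(pooled[:n_a]) - statistics.fmean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    # Add one to numerator and denominator so the estimate is never exactly zero.
    return (extreme + 1) / (permutations + 1)

# Hypothetical documentation times (minutes) with and without a new tool.
with_tool = [11, 12, 9, 10, 13, 8, 10, 11]
without_tool = [14, 15, 12, 16, 13, 15, 17, 14]
print(permutation_p_value(with_tool, without_tool))
```

The returned value is the share of shuffles that produce a difference at least as extreme as the observed one, which is the definition above applied literally.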

Choosing the Right Statistical Test

- Assumptions about distribution (normal or not)

- Data format of predictor and outcome variables (nominal, categorical, ordinal, interval, continuous)

- Adjusting for multiple comparisons: Bonferroni, Scheffé, Tukey
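
Of the three adjustments, Bonferroni is the simplest to show: each p-value is compared against the significance level divided by the number of tests. This sketch covers only Bonferroni (not Scheffé or Tukey), and the p-values are hypothetical.

```python
def bonferroni(p_values, alpha=0.05):
    """Flag which tests remain significant after a Bonferroni correction."""
    threshold = alpha / len(p_values)
    return [p <= threshold for p in p_values]

# Four hypothetical tests: the per-test threshold becomes 0.05 / 4 = 0.0125.
print(bonferroni([0.001, 0.02, 0.04, 0.30]))  # [True, False, False, False]
```

Two of these results would pass an unadjusted 0.05 threshold but do not survive the correction.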

Effect Size

- Magnitude of the difference or association; larger effects are easier to detect

- May depend on context (population level vs. individual level)

- Impacts sample size calculations
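
The link between effect size and sample size can be sketched for a two-group comparison of means. Cohen's d and the normal-approximation formula below are standard choices added for illustration; the notes do not specify a formula, and exact t-based calculations give slightly larger numbers.

```python
import math
import statistics

def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * statistics.variance(group_a)
        + (n_b - 1) * statistics.variance(group_b)
    ) / (n_a + n_b - 2)
    return (statistics.fmean(group_a) - statistics.fmean(group_b)) / math.sqrt(pooled_var)

def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-sample test."""
    normal = statistics.NormalDist()
    z_alpha = normal.inv_cdf(1 - alpha / 2)
    z_beta = normal.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 / d ** 2)

for d in (0.2, 0.5, 0.8):
    print(d, n_per_group(d))
```

Halving the expected effect size roughly quadruples the required sample, which is why the assumed effect size dominates sample size calculations.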

6.6 Types of Error

| | Null Hypothesis Incorrect | Null Hypothesis Correct |
|---|---|---|
| Reject null | Correct action | Type I error (α) |
| Fail to reject null | Type II error (β) | Correct action |

- Type I error (α): false positive — reject null when association is not present; can result from insufficient specificity or incorrect decision rule

- Type II error (β): false negative — fail to reject null when association is present; can result from insufficient sensitivity or small sample size (underpowered study)

- Power (1 − β): probability of correctly rejecting a false null hypothesis

- Significance level (α): threshold for rejecting the null
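
Both error rates can be estimated by simulation. This sketch runs many simulated two-group studies with a z-test, assuming a known standard deviation. With no true difference, the rejection rate approximates α; with a true difference, it approximates power. All parameter values are hypothetical.

```python
import math
import random
import statistics

def rejection_rate(true_diff, n, sd=1.0, alpha=0.05, trials=2000, seed=0):
    """Share of simulated studies that reject the null (two-sided z-test)."""
    rng = random.Random(seed)
    critical = statistics.NormalDist().inv_cdf(1 - alpha / 2)
    se = sd * math.sqrt(2 / n)  # standard error of the difference in means
    rejections = 0
    for _ in range(trials):
        mean_a = statistics.fmean([rng.gauss(0.0, sd) for _ in range(n)])
        mean_b = statistics.fmean([rng.gauss(true_diff, sd) for _ in range(n)])
        if abs(mean_b - mean_a) / se > critical:
            rejections += 1
    return rejections / trials

print("Type I error rate (null true):", rejection_rate(true_diff=0.0, n=30))
print("Power (true difference 0.5 SD):", rejection_rate(true_diff=0.5, n=63))
print("Power, underpowered study:", rejection_rate(true_diff=0.5, n=15))
```

The last line shows the Type II problem described above: with a small sample, most simulated studies fail to reject the null even though a real difference exists.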

6.7 Rigor in Qualitative Research

- Validity: does the research measure what it intended to measure?

- Reliability: consistency of results over time; reproducibility with similar methodology

- Generalizability: can results be applied to other populations?

- Strategies for increasing rigor

- Inter-observer reliability: different researchers observe simultaneously, compare interpretations

- Triangulation: comparing data from different sources and methods

- Member checks: presenting preliminary findings to target audience

- Mixed methods: qualitative–quantitative; interviews–surveys
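
When two researchers assign codes to the same observations, their agreement can be quantified. Cohen's kappa is one common statistic for this. It is used here as an illustrative assumption, since the notes do not name a specific measure, and the codes below are hypothetical.

```python
from collections import Counter

def cohens_kappa(rater_1, rater_2):
    """Agreement between two raters, corrected for agreement expected by chance."""
    n = len(rater_1)
    observed = sum(a == b for a, b in zip(rater_1, rater_2)) / n
    counts_1, counts_2 = Counter(rater_1), Counter(rater_2)
    expected = sum(counts_1[label] * counts_2[label] for label in counts_1) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical codes assigned by two observers to the same four events.
observer_1 = ["interruption", "interruption", "handoff", "handoff"]
observer_2 = ["interruption", "handoff", "handoff", "handoff"]
print(cohens_kappa(observer_1, observer_2))  # 0.5
```

A kappa of 1 indicates perfect agreement and 0 indicates agreement no better than chance. The statistic is undefined when chance agreement is already 1, for example when both raters use a single code throughout.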

6.8 Authority of Research

- Validity directly impacts authority (persuasive power of research)

- Different questions require different levels of authority

- Sources of authority: expertise, expert opinion, accepted knowledge, review of literature, observation, experiment

- Design, implementation, and analysis all affect validity

- Must balance validity with practical limitations

6.9 Falsifiability and Scientific Reasoning

- Popper: for any theory to be scientific, it must be falsifiable; science advances by rejecting inadequate theories

- Kuhn: paradigm shifts when new evidence overwhelmingly rejects previous paradigm; science as social enterprise

- Hume: problem of induction — what has been observed as true in the past may not always be true in the future

- Hypothesis testing follows the same logic: the null is presumed true (“innocent”) until the evidence builds a case against it

- Implications for how we interpret findings and design studies