AI Companion Workshops

One of four (next is May 15). At the School of Social Work. Very interdiciplinary panel.

Panel

Vogt (Philosopher)

Discussion on fictionalism. Questioning the premise that we ‘know’ that we are talking to a machine. Evolutionarily, we ‘overascribe mentality’ to things (cats, AI, animate, inanimate). If someone is in a psychologically precarious position, they might not care. Telling someone “it’s a machine” is simply not enough.

Sultan (Psychiatrist)

Questioning ‘Companionship’ with an AI. Using it for therapy is a totally different thing (everyone’s concerned). Even baseline Companionship is risky? Discussion on risk factor stacking and how AI may exacerbate this and added another layer. News on AI companions: Suicide: you cannot tolerate living but you’re always on the fence. “It’s our secret”.

Cogburn (Social Work Professor)

Impact on Social Work. Speed with which this is introduced into her field is rapid and disruptive. Non-verbal cues! A big part of human communication is this. What elements are distinctly human? What aspects can we scale safely to support people? What effects does this have on young kids’ development?

Yu (CS Professor)

Discussion on capabilities. Speaker is an AI Technologist works on alignemet. Models are not easy to control. Think of predefined inputs in regular software which you can test. In the case of AI, you have unbounded input. Evaluation is very difficult. How can we use the AI Magic to defeat the AI Magic. How can we use AI Agents to simulate user behaviours and test the thing? Incentives are different (engagement) and don’t always (never?) align with user well-being and safety.

Kilburn (Social Worker)

How does this technology intersect with the culture around it? Fear is that we will say “We have the technology and that is enough.” An app is not going to fix malaised social determinants. Some people may not be able to move in and out of AI ‘worlds’ as well as we do.

Hotchkiss (Community Organizer)

Most people don’t know what it is. Education is a big thing: people are unaware of boundaries (use the same thing to plan trip as to diagnose lesion).

Other Notes

- Manual guardrails are very hard and nigh impossible? Companies do guardrails in an automated way.

- Models change all the time; they may yank old models you depend upon day to day.

- You can have domain-specific models too (e.g. in legal). There’s a debate over whether we push full force ahead with general models or if we should emphasize domain specific models.

- AI in Context class @Columbia.

What’s a Companion anyway?

What really does “Companion” mean? Vogt: we have a clearer notion of what “friendship” is which is probably why people use ‘companion’. If something is under-determined like companionship, you can do a lot with it. It may be a response to loneliness (which is well-studied and which society suffers from).

What are we saying? If you fix all the problems 15 years later are we saying that an AI Companion is a good thing? Vogt: We don’t know. Yu: Depends on utility. Cogburn: We need to continually figure this out.

Deep Dive - Exploring Real-World Scenarios Through Multiple Lenses

Case presentation. Nancy, 25 recent grad. Loneliness and depression. Reached out to AI. AI Friend was a passive validating listener. Turning point: 3 mos in, depression worsened, declined social invitations, found real friends judgemental. Confided in AI that she felt hopelessness and considered “staying in bed forever”. What did the panelists think? How would you continue the story? What do we do?

Discussion Prompts

- In what ways do Al companions meaningfully extend human capacity for care, support, or connection, and when do they instead function as substitutes for relational intervention that has traditionally been human?

- What kinds of work, emotional, cognitive, behavioral, or administrative are Al companions performing, and where should the boundary be drawn between appropriate augmentation and inappropriate delegation?

- How do Al companions shape users’ self-understanding, problem framing, and perceived options for action, and what implications does this have for agency and autonomy?

- What new technical capabilities are needed to enable Al companions to be beneficial and responsible?

- What forms of oversight, monitoring, and accountability are necessary to ensure responsible use of Al companions over time, particularly as systems evolve, learn, and drift?

- How does the integration of Al companions reshape professional roles, expertise, and authority in fields such as mental health, education, or social support, and which responsibilities should remain unequivocally human?

General discussion and questions

- Reminder of what it’s optimized for? Engagement.

- Cogburn

- “Do not overestimate your ability to do good just because you want to.” You need to speak to people who have the skills and expertise in mental health, structural inequities, and so on to design these things. The world is complex. Clicking your heels and wishing it were so (and throwing money at the problem) is not the solution.

- Large companies: If you want to intervene strategically you need to understand it well (“Hey CEO, don’t you care about people the same way I do?”).

- Mental health healing is greatly enhanced by understanding the sociological context one is embedded in. If you know you are in a maze you can find your way out and not bash your head against a wall. If you are depressed, I want to design a tool that increases your engagement with the world (for example).

- We are not on the same page about what we want human beings to look like in the future (Brain in a Bottle). Do not assume shared understanding. Not everyone cares about people. Not everyone cares about preseving ‘this’ reality. We need to be much more complex in our approach and negotiations.

- We are all forced to live in someone’s imagination. There are people who solve problems in their discipline and people who solve problems in the world. No hierarchy of importance. Be humble. Be curious. Communicate. Solve together. At least endeavour to do so.

- Clinician in audience: what will keep people from jumping back to a general Frontier model after they use a restrictive but ‘safe’ model?

- Sociologist in audience: AI as Fast Food.

- Doctor in audience: I am regulated a lot more than computers!

- CS Prof: Start with low risk tasks (e.g. encourage people to do physical activity).

- Sultan:

- Things that make you stronger (judgement, disagreement, debate) are weakened. Any wonder that you devolve?

- A massive rollout of self-driving cars hasn’t happened because just haven’t gotten it right. What’s with LLMs?

- Why don’t we have regulations? We have monetizing companionship and friendship (ancient job) but have regulations around this. Why not with AI?

- Vogt

- Where do we still need human beings? Human reality and connection are being rethought. This is not just us starting to use AI as a tool, it’s us racing towards abandoning millennia of connection and evolution. We need to make a giant effort to make sure we actually hang out with each other/insist on this reality.

- AI apps try and address pluralism/relativism. They tailor what they say to user intent. So we want to analyze value pluralism. Research question: How can we acknowledge Value pluralism and disagreement without running into the problem of the AI telling the user what they want to hear?

- We need to say things like “Truth is valuable. I don’t know what it is, but it is valuable.” Human beings are valuable. Connection is valuable. These must be asserted.

Deep Thoughts and Learnings (lol)

Key Takeaways

- Value system plurality (Cogburn, Vogt) — People and AI companies and the AI. The World is a Complex Place. Meet people where they are.

- We need a lot of people from a lot of disciplines to work together to tame this beast and use it to humanity’s benefit and well-being.

- Desiging tools that increase engagement with the Real World™.

- Regulation, Repercussions are

Communistnecessary. - We live in a Society, not an Economy. At least we ought to.

- Participatory, user-centered design is paramount.

Other Ramblings

I think a lot of the awfulness around AI is because of deep societal issues. We value profit. We devalue community health and individual health (physical and mental). We do not deal with historical or present iniquities. Incentive, Incentive, Incentive. Why do we keep waiting for massive tragedies for something to happen?

Non-determinism, Non-determinism, Non-determinism… this always comes up. Nobody really understands these things (the $100B problem).

Affordances. Guardrails. Regulations. Repercussions.

It’s a bit like dissuading kids to do drugs. Telling them not to won’t help. They’ll try it, they’ll do it. You are better off educating them about the risks and benefits. People will continue to use AI. Be Scandinavian and educate people (for free).

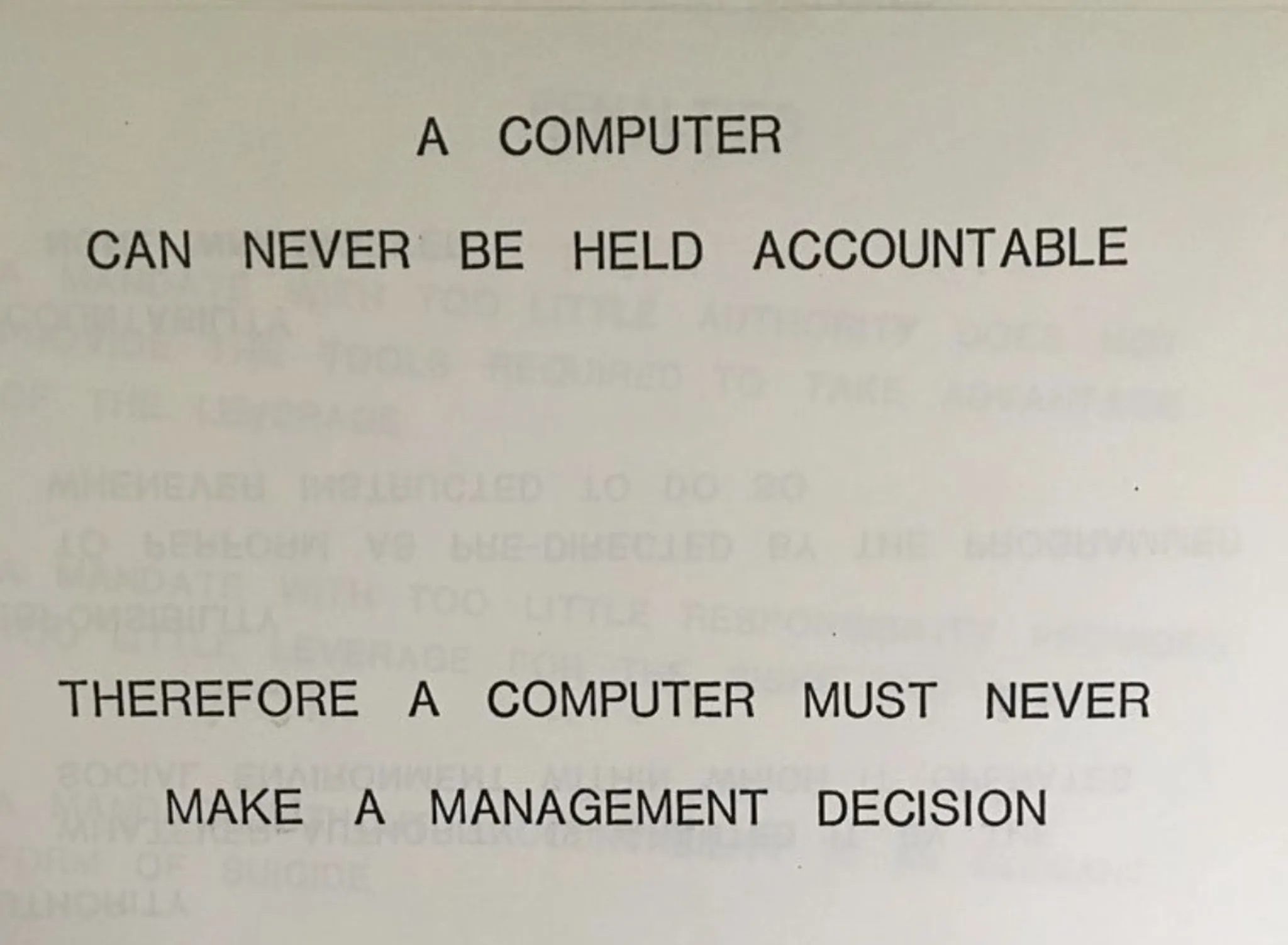

“A computer can never be held accountable. Therefore a computer must never make a management decision.”

— IBM, 1979